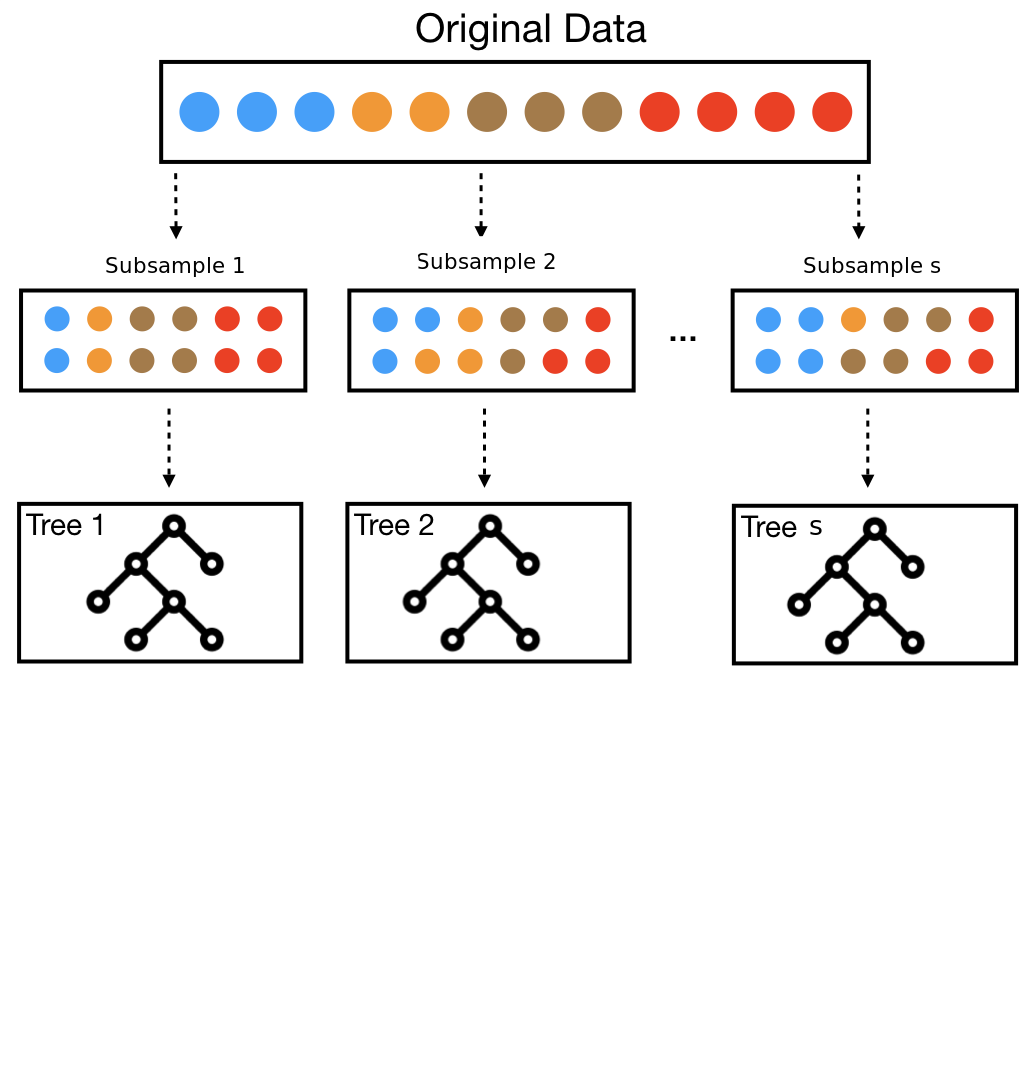

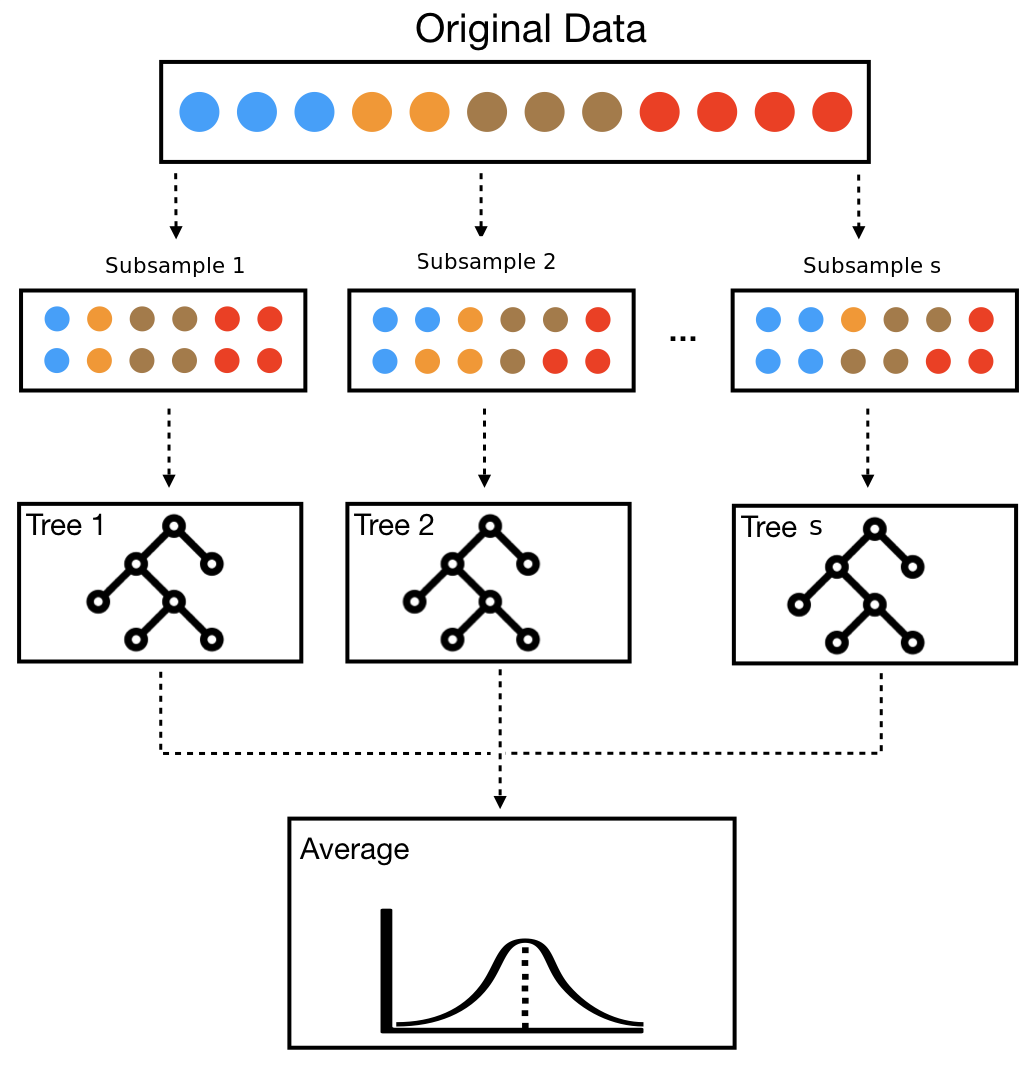

class: center, middle, inverse, title-slide

# Causal Forests <img src='fig/FGV-Logo-3.png' width=250 style='float:right'/>
## for heterogeneous treatment effects <br> <hr>
### Rafael Felipe Bressan
### Sao Paulo School of Economics - EESP
### FGV - Fundação Getúlio Vargas
### March 8th, 2021

---

# Literature Review

The development of Causal Forests comes from a lineage of papers:

- <a name=cite-athey2016recursive></a>[Athey and Imbens (2016)](#bib-athey2016recursive)
    + Recursive partitioning, honest trees

--

- .bold[<a name=cite-wager2018estimation></a>[Wager and Athey (2018)](#bib-wager2018estimation)]
    + .bold[From trees to forests. Asymptotics]

--

- <a name=cite-athey2019generalized></a>[Athey, Tibshirani, and Wager (2019)](#bib-athey2019generalized)
    + Trees as an adaptive kernel regression

--

- <a name=cite-athey2019estimating></a>[Athey and Wager (2019)](#bib-athey2019estimating)
    + Application to observational data

Other important results and applications

- <a name=cite-wager2014confidence></a>[Wager, Hastie, and Efron (2014)](#bib-wager2014confidence)
    - Variance estimation
- <a name=cite-oprescu2019orthogonal></a>[Oprescu, Syrgkanis, and Wu (2019)](#bib-oprescu2019orthogonal)
    - Orthogonal Forests
- <a name=cite-davis2017using></a>[Davis and Heller (2017)](#bib-davis2017using)
    - Application to summer jobs

---

# Motivation

* ML methods perform well in practice, but many do not have well-established asymptotics or confidence intervals

--

* Causal Inference is .red[NOT] the same as prediction.
    + Fundamental problem of causal inference

--

* Random Forests can potentially address several questions of interest:
    + Effect heterogeneity among subgroups
    + Robustness to model specification
    + Personalized estimates

--

* Tackle high-dimensional problems

---

# Potential outcomes framework

We observe a set of i.i.d. 
units `\(i = 1, \ldots, n\)` whose tuples `\((X_i, Y_i, W_i)\)` are:

- Feature **vector** `\(X_i\)`
- Outcome `\(Y_i \in \mathbb{R}\)`
- Treatment assignment `\(W_i \in \{0, 1\}\)`

--

The **conditional average treatment effect** is defined as:

`$$\tau(x)=\mathbb{E}[Y_i^{(1)}-Y_i^{(0)}| X_i = x]$$`

where `\(Y_i^{(0)}\)` and `\(Y_i^{(1)}\)` are the potential outcomes when not treated and treated, respectively.

---
background-image: url(fig/magic.gif)
background-size: cover

# .red[Assumptions]

.font150.center.white[ML is .bold[not dark magic]! We still need to make assumptions to infer the causal effect.]

---

# .red[Assumptions]

ML is .bold[not dark magic]! We still need to make assumptions to infer the causal effect.

Unconfoundedness must hold:

`$$\{Y_i^{(0)}, Y_i^{(1)}\}\perp W_i | X_i$$`

--

All other usual causal inference assumptions: SUTVA, individualistic assignment, probabilistic assignment

---

# Causal Trees

Units on the same leaf `\(L(x)\)` can be understood as _matched neighbors_

Random forests are essentially a form of adaptive nearest-neighbors estimation

Given traditional causal .red[assumptions], we can treat nearby observations in X-space as having come from a randomized experiment

It is natural to estimate the treatment effect locally as:

`$$\hat\tau(x)=\frac{1}{|S_1(x)|}\sum_{i\in S_1(x)}Y_i - \frac{1}{|S_0(x)|}\sum_{i\in S_0(x)}Y_i \qquad\qquad\text{(1)}$$`

+ `\(S_1(x)=\{i:W_i=1, X_i \in L(x)\}\)` and `\(S_0(x)=\{i:W_i=0, X_i \in L(x)\}\)`

---

# From Trees to Random Forests

.footnotesize[ .pull-left[
.bold[Original] training set `\(Z_i=\{(X_i, Y_i, W_i)\}_{i=1}^n\)`

Set `\(\mathcal{B}\)` of trees, where each tree `\(b\in \mathcal{B}\)` provides an estimate through a base learner `\(T\)`

`$$\hat\tau_b(x)=T(x; Z_i^b)$$`

.bold[Random forest idea]

- Subsampling (without replacement, .red[not bagging!])
- Random-split trees: randomly select only `\(m<p\)` features to split at each step
- Different estimates: `\(T(x; Z_i^b) \neq T(x; Z_i^{b'})\)`

.bold[Average] many different trees based on the same learner

`$$\hat\tau(x)=\frac{1}{|\mathcal{B}|}\sum_{b\in\mathcal{B}}T(x; Z_i^b)$$`
]]

.pull-right[  ]

---

# Two Procedures

.footnotesize[ .pull-left[
.blue[Double-sample trees]

1. Draw a random subsample of size `\(s\)` from `\(\{1, \ldots, n\}\)` .bold[without replacement], then split it into two disjoint halves with `\(|\mathcal{I}| = s/2\)` and `\(|\mathcal{J}| = s/2\)`.
2. Grow a tree via recursive partitioning. The splits are chosen using any data from the `\(\mathcal{J}\)`-sample **and X or W observations** from the `\(\mathcal{I}\)`-sample, but never the `\(\mathcal{I}\)`-sample responses (honesty). Minimum leaf size `\(k\)`.
3. Estimate leafwise responses using only the `\(\mathcal{I}\)`-sample observations.

For double-sample causal trees, the prediction is `\(\hat\tau(x)\)`, estimated using (1) on the `\(\mathcal{I}\)`-sample. Following Athey and Imbens (2016), the splits of the tree are chosen by maximizing the variance of `\(\hat\tau(X_i)\)` for `\(i\in \mathcal{J}\)`. Each leaf of the tree must contain `\(k\)` or more `\(\mathcal{I}\)`-sample observations of **each** treatment class.
]]

--

.footnotesize[ .pull-right[
.blue[Propensity trees]

1. 
Random subsample `\(\mathcal{I} \subset \{1,\ldots, n\}\)` of size `\(|\mathcal{I}| = s\)` (no replacement).
2. Train a **classification** tree using sample `\(\mathcal{I}\)`, where the response is the **treatment assignment**, i.e. using only the `\((X_i , W_i)\)` pairs with `\(i\in\mathcal{I}\)`. Each leaf of the tree must have `\(k\)` or more observations of each treatment class.
3. Estimate `\(\hat\tau(x)\)` using (1) on the leaf containing `\(x\)`.

Propensity trees use only the treatment assignment indicator `\(W_i\)` to place splits; therefore, they are honest by design and save the responses `\(Y_i\)` for estimating `\(\hat\tau(x)\)`.
]]

---

# Asymptotic Normality

Regression .red[random forests] are asymptotically Gaussian if the following assumptions hold:

- Honest, random-split, k-regular and symmetric trees (Definitions 2 to 5 of [Wager and Athey (2018)](#bib-wager2018estimation))
- Lipschitz continuous response, `\(\mu(x)=\mathbb{E}\left[Y|X=x\right]\)`
- Subsample size `\(s_n\)`: `\(\displaystyle\lim_{n\rightarrow\infty} s_n = \infty\)` and `\(\displaystyle\lim_{n\rightarrow\infty} s_n\log(n)^d / n=0\)`
- `\(\mathbb{E}\left[|Y-\mathbb{E}[Y|X=x]|^{2+\delta}|X=x\right]\leq M\)`, `\(\delta, M>0\)`, uniformly over all `\(x\in\left[0,1\right]^d\)`

--

.bold[Theorem 3.4] [Wager and Athey (2018)](#bib-wager2018estimation)
If the above conditions hold, then there exists a sequence `\(\sigma_n(x)\rightarrow 0\)` such that

`$$\frac{\hat\mu_n(x)-\mathbb{E}\left[\hat\mu_n(x)\right]}{\sigma_n(x)}\overset{d}{\rightarrow}\mathcal{N}(0,1)$$`

---

# Asymptotic Variance Estimation

Infinitesimal jackknife for .red[random forests]<sup>1</sup>. Define:

- Response of tree `\(b\)` as `\(\hat\mu_b(x)\)`
- Number of times the `\(i\)`-th observation was used in tree `\(b\)` as `\(N_{bi}\)`
- `\(Cov\)` as the covariance taken with respect to all trees in the forest

.footnote[<sup>1</sup> See [Wager, Hastie, and Efron (2014)](#bib-wager2014confidence) for details.]
--

Random forest infinitesimal jackknife variance estimator

`$$\widehat V_{IJ}(x)=\underbrace{\frac{n-1}{n}\left(\frac{n}{n-s}\right)^2}_{\text{finite sample adjustment}}\sum_{i=1}^n Cov\left(\hat\mu_b(x), N_{bi}\right)^2$$`

---

# Asymptotic Variance

.bold[Theorem 3.5] [Wager and Athey (2018)](#bib-wager2018estimation)
Let `\(\widehat V_{IJ}\)` be the infinitesimal jackknife for random forests. Then, under the conditions of Theorem 3.4,

`$$\widehat V_{IJ}(x)/\sigma_n^2(x)\overset{p}{\rightarrow}1$$`

--

Going from random forests to causal forests is trivial: adapt the definitions of honesty and regularity

+ A causal tree is honest if it does not use the responses `\(Y_1, \ldots, Y_s\)` to place its splits

--

+ A causal tree is `\(\alpha\)`-regular at `\(x\)` if (1) each split leaves at least a fraction `\(\alpha\)` of the available units on each side of the split, (2) `\(L(x)\)` has at least `\(k\)` observations for each treatment group, and (3) `\(L(x)\)` has either fewer than `\(2k - 1\)` observations with `\(W_i = 0\)` or fewer than `\(2k - 1\)` observations with `\(W_i = 1\)`

--

.bold[Theorem 4.1] [Wager and Athey (2018)](#bib-wager2018estimation) provides consistency, Gaussian asymptotics and an infinitesimal jackknife variance estimator

---

# Application

[Athey and Wager (2019)](#bib-athey2019estimating) apply Causal Forests to investigate heterogeneity in the **National Study of Learning Mindsets**

--

NSLM: U.S. public high schools. It evaluates the impact of a nudge-like intervention, designed to instill students with a growth mindset<sup>2</sup>, on student achievement

NSLM was randomized, but there seem to be some selection effects

Treat the data as coming from an observational study and .red[assume unconfoundedness]

School **clusters**

.footnote[<sup>2</sup> The belief that intelligence can be developed, aka _"there is still hope for me"_]

---

# Questions to be answered

1. Was the mindset intervention effective in improving student achievement?
2. 
Was the effect of the intervention moderated by school-level achievement (X2) or pre-existing mindset norms (X1)? In particular, there are two competing hypotheses about how X2 moderates the effect of the intervention: either it is largest in middle-achieving schools (a “Goldilocks effect”) or it is decreasing in school-level achievement.
3. Do other covariates moderate treatment effects?

---

# Was the intervention effective?

The authors use a modified version of Causal Forests: random forests as an adaptive kernel method combined with doubly-robust causal effect estimation, i.e. **generalized random forests** ([Athey, Tibshirani, and Wager, 2019](#bib-athey2019generalized))

--

```r
library(grf)

# Causal forest with precomputed nuisance estimates and school clusters
cf <- causal_forest(X[, select_idx], Y, W,
                    Y.hat = Y_hat, W.hat = W_hat,
                    clusters = school_id,
                    equalize.cluster.weights = TRUE,
                    tune.parameters = "all")
average_treatment_effect(cf)
##   estimate    std.err
## 0.24720417 0.02068655
```

---

# Treatment heterogeneity

<div class="figure" style="text-align: center"> <img src="CausalForest_files/figure-html/box-plot-1.png" alt="Treatment effect heterogeneity" width="80%" /> <p class="caption">Treatment effect heterogeneity</p> </div>

---

# Do other covariates moderate the effect?

Once we have a .red[Causal Forest] fit, we can visualize a specific tree

<img src="fig/tree1.png" width="80%" style="display: block; margin: auto;" />

---

# Do other covariates moderate the effect?

Once we have a .red[Causal Forest] fit, we can visualize a specific tree

<img src="fig/tree2.png" width="80%" style="display: block; margin: auto;" />

---

# Do other covariates moderate the effect?

Once we have a .red[Causal Forest] fit, we can visualize a specific tree

Or, we can derive an assignment rule as a shallow tree, <a name=cite-AtheyWager2021policy></a>([Athey and Wager, 2021](#bib-AtheyWager2021policy))

<img src="fig/poltree.png" width="80%" style="display: block; margin: auto;" />

---
class: clear, center, middle
background-image: url(fig/raising-hand.gif)
background-size: cover

<br><br><br><br><br><br><br><br><br><br><br><br><br>

.font300.white.bold.shade[Questions?]

---

# References

.footnotesize[
<a name=bib-athey2016recursive></a>[Athey, S. and G. Imbens](#cite-athey2016recursive) (2016). "Recursive partitioning for heterogeneous causal effects". In: _Proceedings of the National Academy of Sciences_ 113.27, pp. 7353-7360.

<a name=bib-athey2019generalized></a>[Athey, S., J. Tibshirani, and S. Wager](#cite-athey2019generalized) (2019). "Generalized random forests". In: _Annals of Statistics_ 47.2, pp. 1147-1178.

<a name=bib-athey2019estimating></a>[Athey, S. and S. Wager](#cite-athey2019estimating) (2019). "Estimating treatment effects with causal forests: An application". In: _Observational Studies_ 5, pp. 21-35.

<a name=bib-AtheyWager2021policy></a>[Athey, S. and S. Wager](#cite-AtheyWager2021policy) (2021). "Policy Learning With Observational Data". In: _Econometrica_ 89.1, pp. 133-161.

<a name=bib-davis2017using></a>[Davis, J. and S. B. Heller](#cite-davis2017using) (2017). "Using causal forests to predict treatment heterogeneity: An application to summer jobs". In: _American Economic Review_ 107.5, pp. 546-550.

<a name=bib-oprescu2019orthogonal></a>[Oprescu, M., V. Syrgkanis, and Z. S. Wu](#cite-oprescu2019orthogonal) (2019). "Orthogonal random forest for causal inference". In: _International Conference on Machine Learning_. PMLR, pp. 4932-4941.

<a name=bib-wager2018estimation></a>[Wager, S. and S. Athey](#cite-wager2018estimation) (2018). "Estimation and inference of heterogeneous treatment effects using random forests". 
In: _Journal of the American Statistical Association_ 113.523, pp. 1228-1242. <a name=bib-wager2014confidence></a>[Wager, S., T. Hastie, and B. Efron](#cite-wager2014confidence) (2014). "Confidence intervals for random forests: The jackknife and the infinitesimal jackknife". In: _The Journal of Machine Learning Research_ 15.1, pp. 1625-1651. ] --- # Appendix <table class=" lightable-classic" style='font-size: 16px; font-family: "Arial Narrow", "Source Sans Pro", sans-serif; width: auto !important; margin-left: auto; margin-right: auto;'> <thead> <tr> <th style="text-align:left;"> Variables </th> <th style="text-align:left;"> Description </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> S3 </td> <td style="text-align:left;"> Student’s self-reported expectations for success in the future, a proxy for prior achievement, measured prior to random assignment </td> </tr> <tr> <td style="text-align:left;"> C1 </td> <td style="text-align:left;"> Categorical variable for student race/ethnicity </td> </tr> <tr> <td style="text-align:left;"> C2 </td> <td style="text-align:left;"> Categorical variable for student identified gender </td> </tr> <tr> <td style="text-align:left;"> C3 </td> <td style="text-align:left;"> Categorical variable for student first-generation status, i.e. first in family to go to college </td> </tr> <tr> <td style="text-align:left;"> XC </td> <td style="text-align:left;"> School-level categorical variable for urbanicity of the school, i.e. rural, suburban, etc. 
</td> </tr>
<tr> <td style="text-align:left;"> X1 </td> <td style="text-align:left;"> School-level mean of students’ fixed mindsets, reported prior to random assignment </td> </tr>
<tr> <td style="text-align:left;"> X2 </td> <td style="text-align:left;"> School achievement level, as measured by test scores and college preparation for the previous 4 cohorts of students </td> </tr>
<tr> <td style="text-align:left;"> X3 </td> <td style="text-align:left;"> School racial/ethnic minority composition, i.e., percentage of student body that is Black, Latino, or Native American </td> </tr>
<tr> <td style="text-align:left;"> X4 </td> <td style="text-align:left;"> School poverty concentration, i.e., percentage of students who are from families whose incomes fall below the federal poverty line </td> </tr>
<tr> <td style="text-align:left;"> X5 </td> <td style="text-align:left;"> School size, i.e., total number of students in all four grade levels in the school </td> </tr>
<tr> <td style="text-align:left;"> Y </td> <td style="text-align:left;"> Post-treatment outcome, a continuous measure of achievement </td> </tr>
<tr> <td style="text-align:left;"> Z </td> <td style="text-align:left;"> Treatment, i.e., receipt of the intervention </td> </tr>
</tbody> </table>

---

# Appendix - Resources

.small[
This presentation is publicly available at my Github repo [https://github.com/rfbressan/eesp_machine_learning](https://github.com/rfbressan/eesp_machine_learning)

Other interesting resources and papers:

- [EconML](https://econml.azurewebsites.net/): Python library for Machine Learning methods to causal inference
- Chernozhukov, V., Chetverikov, D., Demirer, M., Duflo, E., Hansen, C., Newey, W., & Robins, J. (2018). Double/debiased machine learning for treatment and structural parameters. The Econometrics Journal, 21(1), C1-C68.
- Chang, N. C. (2020). Double/debiased machine learning for difference-in-differences models. The Econometrics Journal, 23(2), 177-191.
- [Rahul Singh, Liyang Sun. (2021). De-biased Machine Learning in Instrumental Variable Models for Treatment Effects. Unpublished Manuscript](http://economics.mit.edu/files/20420)
- Syrgkanis, V., Lei, V., Oprescu, M., Hei, M., Battocchi, K., & Lewis, G. (2019). Machine learning estimation of heterogeneous treatment effects with instruments. arXiv preprint [arXiv:1905.10176](https://arxiv.org/abs/1905.10176).
- Bressan, R. F. (2021). [INFERÊNCIA CAUSAL COM MACHINE LEARNING: uma aplicação para evasão fiscal](https://www.overleaf.com/read/njxdsjrtxqnm). 
Trabalho de conclusão de curso. PUC-MG. [Github](https://github.com/rfbressan/curso_big_data) ] --- # Appendix - Generalized Random Forests .small[ Adaptive kernel `$$\hat{\mu}(x)=\sum_{i=1}^{n} \alpha_{i}(x) Y_{i}, \quad \alpha_{i}(x)=\frac{1}{B} \sum_{b=1}^{B} \frac{\mathbf{1}\left(\left\{X_{i} \in L_{b}(x), i \in \mathcal{S}_{b}\right\}\right)}{\left|\left\{i: X_{i} \in L_{b}(x), i \in \mathcal{S}_{b}\right\}\right|}$$` Doubly-robust regression `$$\hat{\tau}=\frac{\sum_{i=1}^{n} \alpha_{i}(x)\left(Y_{i}-\hat{m}^{(-i)}\left(X_{i}\right)\right)\left(W_{i}-\hat{e}^{(-i)}\left(X_{i}\right)\right)}{\sum_{i=1}^{n} \alpha_{i}(x)\left(W_{i}-\hat{e}^{(-i)}\left(X_{i}\right)\right)^{2}}$$` Augmented inverse propensity weighting `$$\begin{array}{l} \hat{\tau}_{j}=\frac{1}{n_{j}} \sum_{\left\{i: A_{i}=j\right\}} \widehat{\Gamma}_{i}, \quad \hat{\tau}=\frac{1}{J} \sum_{j=1}^{J} \hat{\tau}_{j}, \quad \hat{\sigma}^{2}=\frac{1}{J(J-1)} \sum_{j=1}^{J}\left(\hat{\tau}_{j}-\hat{\tau}\right)^{2} \\ \widehat{\Gamma}_{i}=\hat{\tau}^{(-i)}\left(X_{i}\right)+\frac{W_{i}-\hat{e}^{(-i)}\left(X_{i}\right)}{\hat{e}^{(-i)}\left(X_{i}\right)\left(1-\hat{e}^{(-i)}\left(X_{i}\right)\right)}\left(Y_{i}-\hat{m}^{(-i)}\left(X_{i}\right)-\left(W_{i}-\hat{e}^{(-i)}\left(X_{i}\right)\right) \hat{\tau}^{(-i)}\left(X_{i}\right)\right) \end{array}$$` ]
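
---

# Appendix - AIPW sketch

The augmented inverse propensity weighting formulas on the previous slide can be sketched in base R. This is only an illustration under stated assumptions: the data are simulated (they are not the NSLM data), the oracle values `tau_hat`, `e_hat` and `m_hat` stand in for the out-of-bag forest estimates `\(\hat\tau^{(-i)}\)`, `\(\hat e^{(-i)}\)` and `\(\hat m^{(-i)}\)`, and all variable names are hypothetical.

```r
# Sketch: AIPW scores and a cluster-robust ATE, base R only.
set.seed(42)

# Simulated clustered data (an assumption for illustration).
J     <- 40                                 # number of clusters (schools)
n_per <- 50                                 # units per cluster
A     <- rep(seq_len(J), each = n_per)      # cluster id A_i
n     <- length(A)
X     <- runif(n)                           # a single covariate
e_x   <- 0.3 + 0.4 * X                      # propensity P(W = 1 | X)
W     <- rbinom(n, 1, e_x)                  # treatment assignment
tau_x <- 0.25 + 0.10 * X                    # true CATE tau(x)
school_effect <- rnorm(J, sd = 0.2)[A]      # shared shock within a cluster
Y     <- X + school_effect + W * tau_x + rnorm(n)

# Oracle nuisance values used in place of out-of-bag forest estimates.
tau_hat <- tau_x
e_hat   <- e_x
m_hat   <- X + e_x * tau_x                  # m(x) = E[Y | X = x]

# Doubly-robust score Gamma_i for each unit.
gamma <- tau_hat +
  (W - e_hat) / (e_hat * (1 - e_hat)) *
  (Y - m_hat - (W - e_hat) * tau_hat)

# Cluster means tau_j, their average tau, and the cluster-robust variance.
tau_j  <- as.numeric(tapply(gamma, A, mean))
tau    <- mean(tau_j)
sigma2 <- sum((tau_j - tau)^2) / (J * (J - 1))
se     <- sqrt(sigma2)

cat(sprintf("ATE estimate: %.3f (std.err %.3f)\n", tau, se))
```

Averaging cluster means first, rather than pooling all units, gives every school the same weight and lets the variance be computed across the `J` clusters, which mirrors the role of `clusters` and `equalize.cluster.weights = TRUE` in the `causal_forest()` call shown earlier.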